Pre-Mitigation vs Post-Mitigation Risk Analysis: How to Measure What Your Response Plan Is Worth

You’ve spent months building a risk register. Your team has identified every threat to the schedule and budget. But here’s the problem: your register shows risks, not answers. It lists what could go wrong—not whether your response plan actually fixes it. Without pre-mitigation vs post-mitigation risk analysis, you can’t measure the value of your mitigation strategy. You can’t justify spending $500K on risk response if you don’t know it will save you $2M. That’s where quantitative risk modeling becomes your decision-support engine.

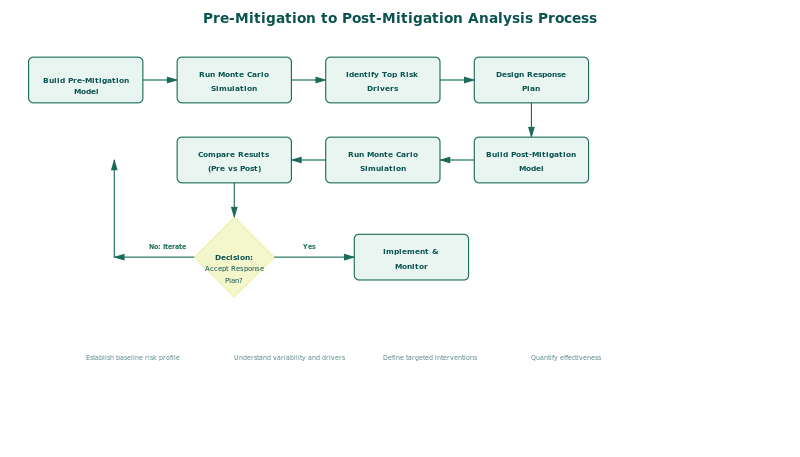

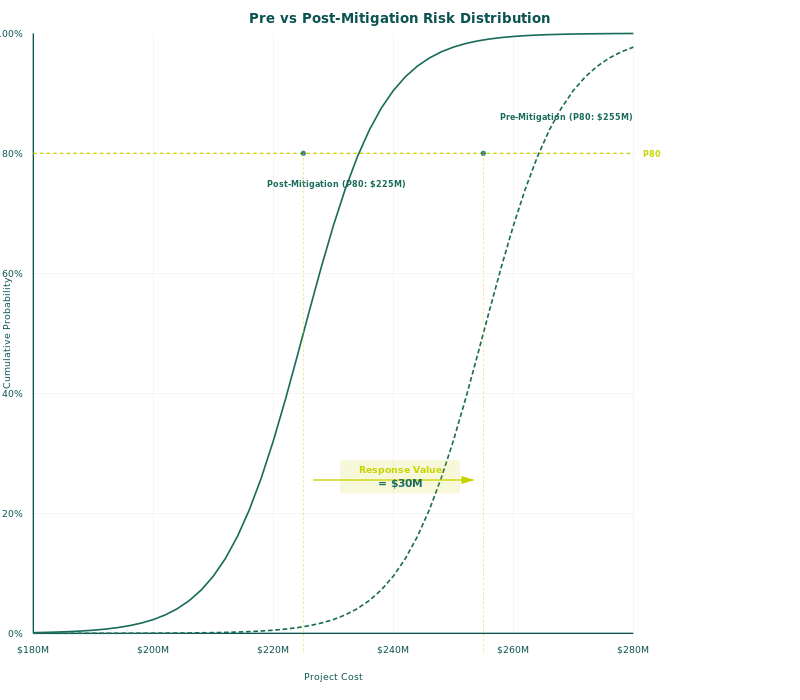

Pre-mitigation vs post-mitigation risk analysis is a two-model comparison that quantifies baseline risk exposure and measures how much your response plan reduces it. Run your Monte Carlo simulation twice, once with original risk parameters and once with mitigation adjustments, then calculate the delta. The result is hard numbers that quantify mitigation ROI and guide funding decisions.

In mature organizations, this comparison isn’t optional. It’s the bridge between risk awareness and risk governance. It answers the question every sponsor and stakeholder asks: “What are we getting for our mitigation spend?” Without it, mitigation decisions are gut calls. With it, they’re data-driven.

Here is how it works, step by step.

Why Pre-Mitigation Analysis Comes First

The pre-mitigation model is your risk baseline. It answers the question: “What happens if we do nothing?” Run your Monte Carlo simulation with all identified risks, original probability and impact parameters, and no response plan in place. The output is your exposure—the P50, P80, and P90 cost and schedule outcomes you face if mitigation never happens.

This baseline isn’t pessimistic fiction. It’s the measured result of your risk data. In Phase 5 of the QSRA (Quantitative Schedule and Risk Analysis) framework, this pre-mitigation run is mandatory. It grounds your analysis in reality: here is our actual exposure, quantified.

Think of it as a diagnostic. Before you recommend a treatment, you measure the patient’s baseline health. Without the pre-mitigation model, you have no baseline. You can’t claim your response plan saves $5M if you never quantified how much you were at risk to lose.

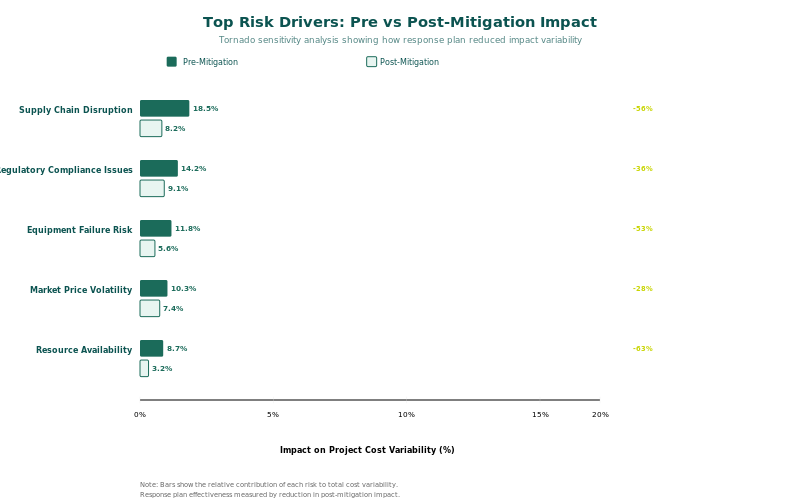

The pre-mitigation output also drives prioritization. Which risks are cost drivers? Which delays are schedule killers? Your tornado chart tells you. That data informs which risks deserve mitigation focus.
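A tornado chart ranks risks by a sensitivity measure computed over the Monte Carlo iterations. The sketch below assumes a hypothetical four-risk cost register with triangular impacts in $M. It ranks risks by the Pearson correlation between each risk's sampled impact and the total risk cost. This is one common choice; tools may instead use rank correlation or contribution to variance.

```python
import math
import random
import statistics

# Hypothetical register: name -> (probability, low, most likely, high), impacts in $M
RISKS = {
    "Permitting delay": (0.40, 10.0, 30.0, 60.0),
    "Supplier variance": (0.55, 5.0, 20.0, 40.0),
    "Labour shortage": (0.60, 5.0, 15.0, 30.0),
    "Minor scope creep": (0.30, 0.5, 1.0, 2.0),
}


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def tornado(risks, iterations=10_000, seed=7):
    """Rank risks by correlation between their sampled impact and total risk cost."""
    rng = random.Random(seed)
    draws = {name: [] for name in risks}
    totals = []
    for _ in range(iterations):
        total = 0.0
        for name, (p, low, mode, high) in risks.items():
            # Occurrence check first; impact is sampled only when the risk occurs
            impact = rng.triangular(low, high, mode) if rng.random() < p else 0.0
            draws[name].append(impact)
            total += impact
        totals.append(total)
    scores = {name: pearson(draws[name], totals) for name in risks}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


for name, r in tornado(RISKS):
    print(f"{name:<20} {r:.2f}")
```

The top bars are the risks most worth a mitigation business case. A small, cheap-to-ignore risk such as the scope-creep entry lands at the bottom.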

How to Build a Pre-Mitigation Risk Model

Building a quantitative pre-mitigation model requires four steps. First, populate your risk register with every identified threat. Include schedule risks (delays from supplier issues, labor shortages, design rework) and cost risks (material inflation, scope creep, contractor performance variance). Second, assign probability and impact distributions to each risk. Don’t use single-point estimates like “30% probability.” Use triangular, lognormal, or uniform distributions that reflect the true range of uncertainty. The Risk Data Engine (RDE) provides vetted historical data to calibrate these distributions.
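Steps one and two can be sketched for a single risk. The sketch assumes a hypothetical `Risk` record that pairs an occurrence probability with a three-point (triangular) cost impact in $M. It also includes a lognormal helper for right-skewed impacts. Note that Python's `random.triangular` takes its arguments as (low, high, mode), an ordering that is easy to get wrong.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Risk:
    """Hypothetical register entry: occurrence probability plus a three-point cost impact ($M)."""
    name: str
    probability: float  # chance the risk occurs in any one iteration (0 to 1)
    low: float          # optimistic impact
    mode: float         # most likely impact
    high: float         # pessimistic impact

    def sample(self, rng: random.Random) -> float:
        """Cost impact for one Monte Carlo iteration; 0.0 when the risk does not occur."""
        if rng.random() >= self.probability:
            return 0.0
        # Argument order is random.triangular(low, high, mode)
        return rng.triangular(self.low, self.high, self.mode)


def lognormal_impact(rng: random.Random, median: float, sigma: float) -> float:
    """Right-skewed alternative: lognormvariate(mu, sigma) has median exp(mu)."""
    return rng.lognormvariate(math.log(median), sigma)


rng = random.Random(42)
supplier = Risk("Supplier disruption", probability=0.40, low=2.0, mode=5.0, high=12.0)
print([round(supplier.sample(rng), 2) for _ in range(5)])
```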

Third, map each risk to the schedule network and cost breakdown structure. A schedule risk isn’t just a number—it’s a delay to specific tasks. A cost risk attaches to specific elements of the budget. This mapping is critical. It allows the Monte Carlo engine to compound risks across your entire plan and show correlated outcomes.
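The mapping matters because a delay only moves the finish date if it lands on, or exceeds the float of, the path that drives completion. Below is a minimal forward-pass sketch that assumes a hypothetical four-task network with durations in days. A `delays` map attaches risk-driven delay to specific tasks.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    duration: float                                        # planned duration, days
    predecessors: list[str] = field(default_factory=list)  # finish-to-start links


def finish_date(tasks: dict[str, Task], delays: dict[str, float]) -> float:
    """Earliest project finish with risk delays added to the tasks they hit.

    Assumes `tasks` is in topological order (every predecessor appears first).
    """
    finish: dict[str, float] = {}
    for name, task in tasks.items():
        start = max((finish[p] for p in task.predecessors), default=0.0)
        finish[name] = start + task.duration + delays.get(name, 0.0)
    return max(finish.values())


network = {
    "design": Task(30),
    "procure": Task(60, ["design"]),
    "permit": Task(45),                       # 45 days of float against design + procure
    "build": Task(120, ["procure", "permit"]),
}
print(finish_date(network, {}))              # 210.0
print(finish_date(network, {"permit": 30}))  # 210.0: absorbed by float
print(finish_date(network, {"permit": 50}))  # 215.0: only the excess over float shows
```

In a Monte Carlo run, `delays` would be resampled every iteration. That is how risks compound across the network rather than simply adding up.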

Fourth, run the Monte Carlo simulation. Industry-standard tools like Safran Risk or Oracle Primavera Risk Analysis can run 10,000 or more iterations, sampling from your probability distributions and calculating a finish date and final cost for each one. The output is a probability distribution of project outcomes: not a single number, but a range of P10, P50, P80, and P90 values that represent different confidence levels.
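Step four can be sketched as a minimal Monte Carlo loop. The sketch assumes a hypothetical three-risk cost register (impacts in $M) and a hypothetical $280M base estimate. It treats the cost risks as independent and does not model the schedule network or correlation, which dedicated tools handle. `statistics.quantiles(..., n=100)` returns the 99 percentile cut points, and P10 to P90 are read from those.

```python
import random
import statistics

# Hypothetical register: (probability, low, most likely, high), impacts in $M
RISKS = [
    (0.40, 10.0, 30.0, 60.0),
    (0.55, 5.0, 20.0, 40.0),
    (0.60, 5.0, 15.0, 30.0),
]
BASE_COST = 280.0  # hypothetical deterministic estimate, $M


def simulate(risks, base, iterations=10_000, seed=42):
    """Total cost per iteration: base estimate plus the impacts of risks that occur."""
    rng = random.Random(seed)  # fixed seed makes the run repeatable
    totals = []
    for _ in range(iterations):
        cost = base
        for p, low, mode, high in risks:
            if rng.random() < p:
                cost += rng.triangular(low, high, mode)
        totals.append(cost)
    return totals


def percentiles(samples, levels=(10, 50, 80, 90)):
    """Read P-values off the simulated distribution."""
    cuts = statistics.quantiles(samples, n=100)  # 99 cut points: P1 ... P99
    return {f"P{k}": round(cuts[k - 1], 1) for k in levels}


print(percentiles(simulate(RISKS, BASE_COST)))
```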

Document this pre-mitigation baseline. Save your Monte Carlo results, tornado chart, and cumulative probability curve. You’ll use these as your comparison benchmark.

Most importantly: run this analysis before your mitigation strategy is locked in. Pre-mitigation analysis is decision input, not justification for a plan already built. It tells you what you’re actually facing.

Designing Your Response Plan for Quantitative Measurement

Many teams build response plans that can’t be measured. They list mitigation actions in prose: “Improve supplier relationships” or “Strengthen design review.” These are good practices, but they’re invisible to a quantitative model. Your post-mitigation analysis will fail if your response strategies aren’t quantifiable.

Structure every mitigation strategy around one or more of three mechanisms: reduce probability, reduce impact, or transfer the risk. A supplier diversification strategy reduces the probability of single-source supply chain disruption. An accelerated design review reduces the probability and impact of design rework. Risk transfer (insurance, performance bonds, subcontractor holdbacks) shifts impact to a third party. Each mechanism has a measurable parameter.

For each mitigation action, define: (1) which risk it addresses, (2) the parameter it affects (probability or impact), (3) the expected reduction (e.g., reduce probability from 40% to 15%), (4) the hard cost to implement it, and (5) the timeline of implementation. Document this in a mitigation matrix. Your post-mitigation model will pull these numbers directly.
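One way to keep the mitigation matrix machine-readable is a typed record per action. The sketch below uses hypothetical field names, risk IDs, and values. Each row may change probability, impact, or both, so the cost is recorded once per action. The validator rejects a row that changes no model parameter, which is exactly the "improve supplier relationships" problem described earlier.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MitigationAction:
    """One row of a hypothetical mitigation matrix (the five fields listed above)."""
    risk_id: str                               # (1) which risk it addresses
    probability_after: Optional[float] = None  # (2)+(3) new probability, or None if unchanged
    impact_after: Optional[float] = None       # (2)+(3) new impact in $M, or None if unchanged
    cost: float = 0.0                          # (4) hard cost to implement, $M
    ready_month: int = 0                       # (5) month the action is fully in place

    def __post_init__(self):
        if self.probability_after is None and self.impact_after is None:
            raise ValueError(f"{self.risk_id}: action changes no model parameter")
        if self.probability_after is not None and not 0.0 <= self.probability_after <= 1.0:
            raise ValueError(f"{self.risk_id}: probability must be between 0 and 1")


matrix = [
    MitigationAction("R-01", probability_after=0.15, cost=0.5, ready_month=3),
    MitigationAction("R-02", probability_after=0.20, impact_after=0.2, cost=0.3, ready_month=6),
]
total_mitigation_cost = sum(action.cost for action in matrix)
print(f"Total mitigation spend: ${total_mitigation_cost:.1f}M")
```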

Some mitigation strategies are dependencies in the schedule. If you’re hiring a specialist to reduce rework risk, that hiring must happen before the phase where rework is likely. Others are standalone cost spend. Either way, your model must capture the sequence and cost.

Running the Post-Mitigation Model

Clone your pre-mitigation model. Don’t overwrite it—you need both for comparison. In the cloned model, make three types of changes. First, adjust probability and impact parameters for risks that mitigation addresses. If your mitigation strategy reduces supplier disruption probability from 40% to 15%, change that parameter. If it reduces design rework cost impact from $800K to $200K, update the distribution.

Second, add the hard cost of mitigation to your project budget. If you’re spending $500K on supplier redundancy, that money appears as a cost line item in the post-mitigation schedule and budget. It doesn’t disappear—it’s invested upfront to reduce exposure.

Third, revise the schedule if your mitigation strategy introduces new tasks. Early design reviews, additional testing, supplier qualification—these are schedule activities that consume time and cost upfront. They belong in the post-mitigation network.
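The three changes can be applied to a clone so the baseline stays untouched. The sketch below assumes a hypothetical dictionary-based model with impacts in $M and task durations in days. Its parameter values come from the examples above: probability 40% to 15%, rework impact $800K to $200K, and $500K supplier redundancy. `copy.deepcopy` matters here, because a shallow copy would share the nested dictionaries and silently edit the pre-mitigation model. For brevity, tasks are just name-to-duration pairs; a real model would also wire the new activity into the logic network.

```python
import copy

# Hypothetical pre-mitigation model; impacts in $M, durations in days
pre_model = {
    "risks": {
        "supplier_disruption": {"probability": 0.40, "impact": 5.0},
        "design_rework": {"probability": 0.30, "impact": 0.8},
    },
    "cost_lines": {"base_estimate": 120.0},
    "tasks": {"design": 90, "procure": 120, "build": 300},
}


def build_post_model(pre, adjustments, mitigation_costs, new_tasks):
    """Clone the pre-mitigation model and apply the three kinds of change."""
    post = copy.deepcopy(pre)  # deep copy: nested dicts in `pre` are never modified
    for (risk_id, parameter), value in adjustments.items():
        post["risks"][risk_id][parameter] = value  # 1. adjusted risk parameters
    post["cost_lines"].update(mitigation_costs)    # 2. hard cost of mitigation
    post["tasks"].update(new_tasks)                # 3. new schedule activities
    return post


post_model = build_post_model(
    pre_model,
    adjustments={("supplier_disruption", "probability"): 0.15,
                 ("design_rework", "impact"): 0.2},
    mitigation_costs={"supplier_redundancy": 0.5},
    new_tasks={"supplier_qualification": 45},
)
```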

Run the post-mitigation Monte Carlo. Same number of iterations as pre-mitigation. Document the P50, P80, P90 results. Also generate a tornado chart for the post-mitigation scenario—it will show a different sensitivity profile, because risks have been adjusted.
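The sketch below runs both models with the same iteration count. It assumes hypothetical registers in $M where mitigation lowers one probability and halves one impact range. Both runs also use the same seed and draw the same random numbers in the same order (a variance-reduction technique known as common random numbers), so the pre-post delta reflects the parameter changes rather than sampling noise. The mitigation spend is left out of both runs here, so the delta is a gross reduction. The spend still has to be deducted before claiming a net benefit.

```python
import random
import statistics

# Hypothetical registers: name -> (probability, low, most likely, high), impacts in $M
PRE = {
    "permitting": (0.40, 10.0, 30.0, 60.0),
    "supplier": (0.55, 5.0, 20.0, 40.0),
    "labour": (0.60, 5.0, 15.0, 30.0),
}
POST = {
    "permitting": (0.18, 10.0, 30.0, 60.0),  # probability reduced
    "supplier": (0.55, 2.5, 10.0, 20.0),     # impact range halved
    "labour": (0.60, 5.0, 15.0, 30.0),       # not mitigated
}
BASE = 280.0


def simulate(risks, base, iterations=10_000, seed=2024):
    rng = random.Random(seed)
    totals = []
    for _ in range(iterations):
        cost = base
        for p, low, mode, high in risks.values():
            u = rng.random()
            impact = rng.triangular(low, high, mode)  # drawn every time to keep streams aligned
            if u < p:
                cost += impact
        totals.append(cost)
    return totals


def p_values(samples):
    cuts = statistics.quantiles(samples, n=100)
    return {f"P{k}": cuts[k - 1] for k in (50, 80, 90)}


pre, post = simulate(PRE, BASE), simulate(POST, BASE)
pre_p, post_p = p_values(pre), p_values(post)
for key in pre_p:
    print(f"{key}: pre {pre_p[key]:.1f}  post {post_p[key]:.1f}  delta {post_p[key] - pre_p[key]:.1f}")
```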

The post-mitigation result is not the “true” outcome. It’s the modeled outcome if your mitigation strategy executes as planned. How well it matches reality depends on how accurately you’ve captured your risks and mitigation parameters. That’s why data quality and validation are essential.

Comparing Pre and Post Results — The Decision Point

Now you have two sets of numbers. This table shows what the comparison looks like:

| Metric | Pre-Mitigation | Post-Mitigation | Delta |
|---|---|---|---|
| P50 Cost | $125M | $118M | –$7M |
| P80 Cost | $142M | $131M | –$11M |
| P90 Cost | $158M | $143M | –$15M |
| P50 Schedule (days) | 1,240 | 1,198 | –42 |
| P80 Schedule (days) | 1,385 | 1,318 | –67 |
| Mitigation Investment | $0 | $3.2M | +$3.2M |
| Net Benefit (P80 Cost) | n/a | $7.8M | ROI: 244% |

Read this table left to right. At P50 (the median outcome), mitigation saves $7M in cost and 42 days in schedule. At P80 (80% confidence), the benefit grows to $11M and 67 days. But mitigation costs $3.2M upfront. The net benefit at P80 is $7.8M ($11M minus $3.2M), a 244% return on investment.

This is the number your sponsor needs. Not “we reduced risk,” but “we spend $3.2M to save $7.8M at 80% confidence.” This is quantitative decision support. It justifies the mitigation spend. It also identifies which confidence level you should design to—do you need to hit P80 or P90? That answer comes from comparing the cost of additional mitigation vs the business value of reduced exposure.

The tornado chart comparison is equally important. Compare the pre-mitigation and post-mitigation tornado charts side by side. Which risks remain as cost drivers? Your mitigation strategy may have fixed one exposure, but revealed another. The post-mitigation tornado tells you whether your response plan is working or whether you need to adjust focus.

Mitigation ROI Formula:

ROI = (Pre-Mitigation Exposure − Post-Mitigation Exposure − Cost of Mitigation) / Cost of Mitigation

Example: ($142M − $131M − $3.2M) / $3.2M = $7.8M / $3.2M ≈ 244% ROI at P80 confidence
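The net-benefit and ROI arithmetic fits in a small helper. This is a sketch with a hypothetical function name. It follows the table's convention: the gross reduction in exposure at one confidence level, minus the mitigation spend, divided by that spend.

```python
def mitigation_roi(pre_exposure, post_exposure, mitigation_cost):
    """Return (net benefit, ROI) for one confidence level.

    Net benefit is the gross reduction in exposure minus the mitigation spend;
    ROI is that net benefit divided by the spend. Use one unit (e.g. $M) throughout.
    """
    if mitigation_cost <= 0:
        raise ValueError("mitigation_cost must be positive")
    net_benefit = (pre_exposure - post_exposure) - mitigation_cost
    return net_benefit, net_benefit / mitigation_cost


net, roi = mitigation_roi(pre_exposure=142.0, post_exposure=131.0, mitigation_cost=3.2)
print(f"P80 net benefit ${net:.1f}M, ROI {roi:.0%}")  # P80 net benefit $7.8M, ROI 244%
```

A negative result means the spend exceeds the exposure it removes at that confidence level.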

One critical point: the difference between pre-mitigation and post-mitigation is NOT your contingency budget. Contingency is a reserve account that covers actual, realized risks. The pre-post delta is the measured value of your proactive mitigation strategy. They’re different concepts serving different governance purposes.

Real-World Scenario: Brownfield Oil & Gas Project

A major oil & gas operator faced a brownfield expansion project: upgrade an aging facility to increase processing capacity. The baseline project was estimated at $280M over 42 months. Early risk workshops identified four major cost drivers: regulatory permitting delays (40% probability, $35M impact), equipment supplier performance variance (55% probability, $28M impact), construction labor availability (60% probability, $22M impact), and subsurface uncertainty in remediation scope (35% probability, $18M impact).

The pre-mitigation Monte Carlo analysis (10,000 iterations, lognormal distributions for all risks) produced a P50 of $310M, P80 of $335M, and P90 of $358M. Schedule risk ran 38 to 62 days of delay at those same confidence levels. The organization faced $55M of cost exposure (P80 minus baseline).

The response plan targeted all four risks. For permitting, they hired a regulatory specialist and accelerated early engagement with agencies (reduce probability from 40% to 18%, cost $1.2M). For supplier performance, they contracted with two qualified vendors instead of one and staged qualification testing (reduce impact from $28M to $12M, reduce probability from 55% to 30%, cost $2.1M). For labor, they secured a labor agreement with regional unions 6 months early (reduce probability from 60% to 25%, cost $0.8M). For subsurface uncertainty, they funded additional geotechnical investigation (reduce impact from $18M to $8M, cost $0.6M). Total mitigation spend: $4.7M.

The post-mitigation model incorporated these adjustments. The P50 dropped to $298M ($12M below the pre-mitigation P50, though still above the $280M baseline estimate), P80 to $315M, and P90 to $332M. Schedule risk improved to 12 to 38 days of delay. Net benefit at P80: $20M savings minus $4.7M investment = $15.3M net gain. Mitigation ROI: $15.3M / $4.7M ≈ 326%.
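The scenario's headline arithmetic can be checked directly from the figures above. The sketch also adds a simple expected-value screen (probability × point impact) per risk, before and after mitigation. That screen is an illustration added here, not the lognormal Monte Carlo the scenario describes, so its totals are not expected to match the P-values.

```python
# (probability, impact $M) before and after mitigation, taken from the scenario text
pre = {
    "permitting": (0.40, 35.0),
    "supplier":   (0.55, 28.0),
    "labour":     (0.60, 22.0),
    "subsurface": (0.35, 18.0),
}
post = {
    "permitting": (0.18, 35.0),
    "supplier":   (0.30, 12.0),
    "labour":     (0.25, 22.0),
    "subsurface": (0.35, 8.0),
}
mitigation_cost = {"permitting": 1.2, "supplier": 2.1, "labour": 0.8, "subsurface": 0.6}


def expected_value(register):
    """Sum of probability x point impact across the register ($M)."""
    return sum(p * impact for p, impact in register.values())


ev_pre, ev_post = expected_value(pre), expected_value(post)
spend = sum(mitigation_cost.values())
p80_net = (335.0 - 315.0) - spend  # pre-mitigation P80 minus post-mitigation P80, less spend

print(f"EV pre {ev_pre:.1f}, EV post {ev_post:.1f}, spend {spend:.1f}")  # EV pre 48.9, EV post 18.2, spend 4.7
print(f"P80 net benefit {p80_net:.1f}, ROI {p80_net / spend:.0%}")      # P80 net benefit 15.3, ROI 326%
for name in pre:
    cut = pre[name][0] * pre[name][1] - post[name][0] * post[name][1]
    print(f"{name:<11} EV reduction {cut:5.1f}  cost {mitigation_cost[name]:.1f}")
```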

This example illustrates why pre-post mitigation analysis matters. The project sponsor asked, "Is $4.7M in mitigation worth it?" The pre-post analysis answered with data: "Yes. It cuts the P80 cost by $20M, a net gain of $15.3M after the mitigation spend." That answer drove board approval and justified the upfront mitigation investment. Without the quantitative comparison, the conversation would have been about opinion, not evidence.

Common Mistakes That Invalidate the Comparison

Mistake 1: Updating Only Probability Without Impact. A mitigation strategy often affects both probability and impact, but teams capture only one. If your response plan reduces the likelihood of a supplier failure (probability) but doesn't address the cost of that failure (impact), the model misses the full benefit. Inspect each mitigation strategy for all affected parameters and update them all.

Mistake 2: Forgetting to Deduct Mitigation Cost. You've reduced risk from $40M to $20M exposure. Great, but you spent $8M on mitigation. If your post-mitigation model shows $20M savings but doesn't subtract the $8M investment, your ROI looks inflated. The post-mitigation budget must include every dollar of mitigation spend. It's not invisible.

Mistake 3: Assuming Mitigation Happens Instantly. A mitigation strategy has a timeline. You can't reduce design rework risk in Month 8 if your mitigation (additional review gates) doesn't launch until Month 12. Your post-mitigation schedule must show when mitigation activities occur and how they affect the network. If the activity occurs after the risk window has closed, it provides no benefit.
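Timing can be made explicit in the model. The sketch below uses a hypothetical rule and function name, and assumes the risk is equally likely to strike at any point in its window. Under that assumption, the mitigated probability applies only to the share of the window after the action is in place. This is a simplification, not how any particular tool handles timing.

```python
def effective_probability(base_p, mitigated_p, ready_month, window_start, window_end):
    """Blend probabilities by the share of the risk window the mitigation covers.

    Hypothetical simplification: the risk is equally likely to strike at any
    point between window_start and window_end (months).
    """
    if ready_month <= window_start:
        return mitigated_p  # in place before the window opens: full benefit
    if ready_month >= window_end:
        return base_p       # arrives after the window closes: no benefit
    covered = (window_end - ready_month) / (window_end - window_start)
    return covered * mitigated_p + (1 - covered) * base_p


# Review gates launch in Month 12, but the rework window is Months 8 to 10
print(effective_probability(0.40, 0.15, ready_month=12, window_start=8, window_end=10))  # 0.4
```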

Mistake 4: Comparing Different Risk Assumptions. Your pre-mitigation model includes a set of identified risks. Your post-mitigation model should include the same risks, with adjusted parameters for those that mitigation addresses. Don't add new risks to the post-mitigation model that weren't in the pre-mitigation baseline. If you discover a new risk, update both models and re-run both. Otherwise you're comparing different risk universes, not the impact of mitigation.
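A cheap guard against this mistake is to check the risk IDs before every comparison run. The sketch assumes each model keeps its risks in a dictionary keyed by risk ID (a hypothetical structure and function name).

```python
def check_same_risk_universe(pre_risks, post_risks):
    """Raise if the two models do not contain exactly the same risk IDs."""
    missing = sorted(set(pre_risks) - set(post_risks))
    added = sorted(set(post_risks) - set(pre_risks))
    if missing or added:
        raise ValueError(f"risk sets differ: missing from post {missing}, new in post {added}")


pre_risks = {"R-01": 0.40, "R-02": 0.30}
post_risks = {"R-01": 0.15, "R-02": 0.30}
check_same_risk_universe(pre_risks, post_risks)  # passes: same IDs, different parameters
```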

Mistake 5: Using Single-Point Estimates for Mitigation Effectiveness. Teams often assume mitigation reduces probability from 40% to exactly 15%. Reality is more complex. Mitigation effectiveness has uncertainty. If you're hiring a specialist to reduce rework, there's still a range of outcomes. Use distributions for mitigation parameters, not point values. This reflects the true uncertainty of your response plan.
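The sketch below treats mitigation effectiveness as a distribution. It assumes a hypothetical triangular range for the mitigated probability (10% optimistic, 15% most likely, 25% pessimistic), sampled fresh in each iteration before the occurrence draw. Because this range is skewed upward, its mean is about 16.7%. A model that uses the 15% point value would understate residual risk in this hypothetical case.

```python
import random
import statistics


def residual_occurrence_rate(low, mode, high, iterations=20_000, seed=11):
    """Share of iterations in which a mitigated risk still occurs.

    Each iteration first samples the mitigated probability itself from a
    triangular distribution, then samples whether the risk occurs.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(iterations):
        p = rng.triangular(low, high, mode)  # uncertain mitigation effectiveness
        if rng.random() < p:
            hits += 1
    return hits / iterations


rate = residual_occurrence_rate(low=0.10, mode=0.15, high=0.25)
mean_p = statistics.fmean([0.10, 0.15, 0.25])  # mean of a triangular distribution
print(f"point estimate 15.0%, simulated {rate:.1%}, distribution mean {mean_p:.1%}")
```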

IQRM's Approach to Pre-Post Mitigation Analysis

IQRM integrates pre-post mitigation analysis into Phase 5 of the Quantitative Schedule and Risk Analysis (QSRA) framework. Phase 5 is where you move from identifying risks to quantifying response value. The workflow is systematic.

First, you run the pre-mitigation Monte Carlo baseline using risk data from your register and probability distributions calibrated with the Risk Data Engine (RDE). The RDE connects your model to historical project performance, ensuring your distributions reflect real exposure, not wishful thinking. You document P10, P50, P80, P90 outcomes and generate the tornado chart.

Second, you design quantifiable mitigation strategies. IQRM emphasizes that every response action must have a measurable input to the model: a probability reduction, an impact reduction, or a risk transfer mechanism. The mitigation matrix maps each strategy to its cost, timeline, and parameter adjustment.

Third, you clone the pre-mitigation model and build the post-mitigation scenario. IQRM practitioners use tools like Safran Risk or similar Monte Carlo platforms that allow rapid scenario comparison. The post-mitigation run uses the same structure and iterations as the baseline.

Fourth, you compare. IQRM generates side-by-side summary reports: pre-mitigation vs post-mitigation cost and schedule distributions, tornado charts, cumulative probability curves, and ROI metrics. This comparison becomes your decision support package for governance. It answers "How much is our response plan worth?" with numbers, not assertions.

The IQRM approach also emphasizes iteration. If the pre-post analysis shows that your mitigation plan doesn't deliver adequate ROI, you iterate. Strengthen response strategies, find cost-effective alternatives, or accept higher residual risk. The model is a feedback loop, not a one-time report. This is how organizations move from risk awareness to risk optimization.

Frequently Asked Questions

1. How many iterations should I run in my Monte Carlo simulation?

Common industry practice is 10,000 iterations minimum for robust statistics. At that count the sampling error on a percentile is small: with about 95% confidence, the value reported as P50 corresponds to a true percentile between roughly P49 and P51. Some teams run 50,000+ for very complex models with interdependent risks. The cost is computational time, not money. Run at least 10,000 to claim statistical confidence in your pre-post comparison.
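The precision claim can be checked with the normal approximation to the binomial: the share of n samples that fall below the true p-quantile has standard error sqrt(p(1 − p)/n). The sketch below (hypothetical function name) turns that into an approximate interval on the percentile rank the estimate actually represents. How this translates into dollars or days depends on how steep the S-curve is near that percentile.

```python
import math
from statistics import NormalDist


def percentile_rank_interval(p, n, confidence=0.95):
    """Approximate range of true percentile ranks covered by an estimated P-value.

    Normal approximation to the binomial: the share of n samples falling
    below the true p-quantile has standard error sqrt(p * (1 - p) / n).
    """
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    half_width = z * math.sqrt(p * (1 - p) / n)
    return p - half_width, p + half_width


for p in (0.5, 0.8, 0.9):
    for n in (1_000, 10_000, 50_000):
        low, high = percentile_rank_interval(p, n)
        print(f"P{round(p * 100)} with {n:>6} iterations: true rank ~ P{low * 100:.1f} to P{high * 100:.1f}")
```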

2. Should I include mitigation costs in contingency?

No. Mitigation is a planned, budgeted response activity. It appears in your baseline project cost. Contingency is a reserve for unplanned, realized risk. They serve different governance purposes. Mitigation is proactive spend to reduce risk; contingency is reactive reserve to cover realized exposure. If you fold mitigation into contingency, you lose the ability to distinguish planned response from unplanned variance.

3. Can I use single-point estimates for my risk parameters?

Technically yes, but your results won't capture true uncertainty. A single-point estimate (30% probability) assumes false precision. Use triangular distributions (optimistic, most likely, pessimistic) at minimum, lognormal distributions where impacts have a long right tail, or uniform distributions where you can only bound the range. Distributions take little extra effort to enter in Safran Risk or similar tools, and they produce far more credible Monte Carlo output.

4. How do I know if my mitigation parameters are realistic?

Benchmark against historical data. If you claim that a supplier qualification program reduces supplier failure probability from 50% to 12%, does that match your historical experience? The Risk Data Engine (RDE) provides vetted data on mitigation effectiveness across industries. Use it. Also validate with subject matter experts who've executed similar mitigation strategies. Avoid invented numbers.

5. What if my post-mitigation model shows negative ROI?

That's valuable feedback. It means your mitigation strategy costs more than the risk it eliminates. Options: (a) redesign mitigation to be more cost-effective, (b) focus on the highest-value risks instead of all risks, (c) accept the risk and don't mitigate. Negative ROI is not failure; it's honest analysis that prevents you from overspending on low-value mitigation.

6. How often should I update the pre-post comparison?

Quarterly at minimum if risks are evolving. Annually if the project is stable. If a major risk materializes or mitigation proves ineffective, re-run immediately. The pre-post analysis is not a one-time deliverable; it's a living decision-support tool. As your project progresses, actual outcomes inform updated parameters, and the comparison guides real-time governance.

7. What's the difference between pre-mitigation exposure and contingency?

Pre-mitigation exposure is the measured range of outcomes if you do nothing (P50, P80, P90 costs/schedules). Contingency is a reserve budget account, typically sized to a confidence level like P70 or P80. You might have $35M pre-mitigation exposure at P80, but size contingency at $20M if you're executing active mitigation. The $15M difference is the gross value of your mitigation strategy, before deducting what the mitigation itself costs. Contingency protects against residual (post-mitigation) risk.

Pre-mitigation vs post-mitigation risk analysis is the discipline that separates risk awareness from risk governance. You can list threats in a register all day, but until you quantify what your response plan is worth, you're guessing at mitigation decisions. Build both models. Compare them. Use the delta to justify your spending and guide your response priorities. This is how mature organizations turn risk data into business advantage.

IQRM delivers specialist training and consulting in pre-mitigation and post-mitigation risk analysis, Monte Carlo simulation, and risk-based forecasting. Our QRM Diploma programme equips professionals with the practical skills to build, run, and interpret quantitative risk models on real projects.