Probability Distributions for Risk Analysis: How to Choose the Right One for Your Model

You have a risk register, a schedule, and a Monte Carlo tool ready to go. But the moment you need to assign a distribution to an activity or a risk event, the process stalls. Triangle or PERT? Uniform or Lognormal? The wrong choice does not just affect a single input; it distorts every confidence level, every contingency figure, and every decision your model is supposed to support.

Probability distributions for risk analysis are mathematical functions that define how uncertainty is sampled inside a Monte Carlo simulation. They control the range and likelihood of possible durations, costs, or frequencies across thousands of iterations, producing the S-curves and confidence levels that decision-makers rely on. Choosing the right distribution determines whether your model reflects reality or produces misleading contingency estimates.

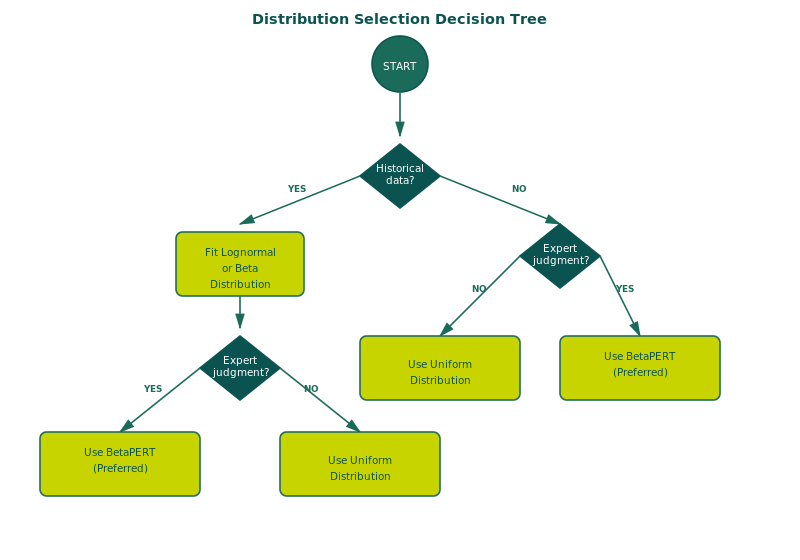

The critical insight most practitioners miss is that distribution selection should be driven by data availability, not personal preference. IQRM's data sufficiency framework provides a clear, repeatable rule: the number of historical data points you have determines which distribution you are justified in using. This removes subjectivity from the process and makes your model defensible under audit.

This guide covers the distributions most commonly used in quantitative risk analysis, when to use each one, and how IQRM's data sufficiency rules make the choice for you.

Why Distribution Choice Matters More Than Most Analysts Realise

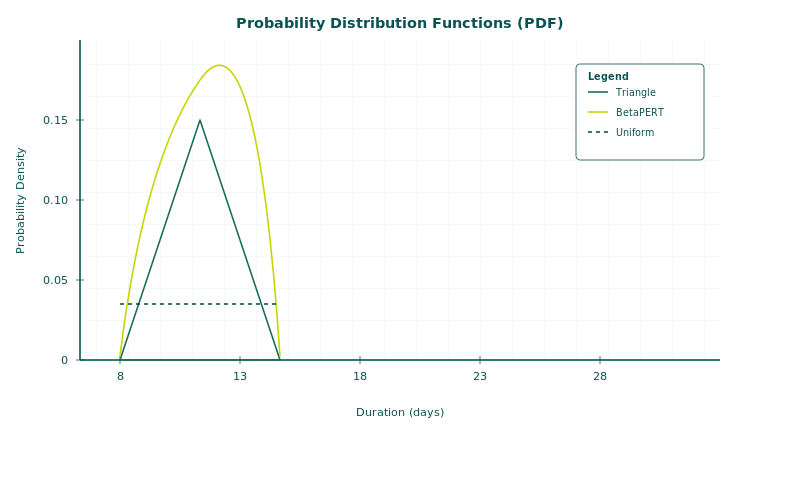

The distribution you assign to a variable determines how the Monte Carlo engine samples values during simulation. A Triangle distribution with a minimum of 10 days, most likely of 14 days, and maximum of 30 days will generate a materially different spread of outcomes than a PERT distribution with the same three inputs. The Triangle places more probability in the tails, so it produces the more conservative result: its mean is 18 days. The PERT concentrates more samples around the most likely value, giving a mean of 16 days. Over 10,000 iterations, that difference carries through to meaningfully different P50, P80, and P90 dates on your Schedule Risk Analysis output.
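The comparison above can be checked with a short simulation. The sketch below is a minimal illustration using only Python's standard library, not the sampling routine of any particular risk tool. It assumes the conventional BetaPERT parameterisation with a mode weight of 4, and the helper names (`sample_triangle`, `sample_pert`, `percentile`) are hypothetical.

```python
import random

def sample_triangle(rng, low, mode, high):
    # random.triangular takes its arguments in the order (low, high, mode).
    return rng.triangular(low, high, mode)

def sample_pert(rng, low, mode, high, lam=4.0):
    # Conventional BetaPERT: a Beta distribution rescaled to [low, high],
    # with shape parameters set by the mode and a weight lam (assumed 4).
    span = high - low
    alpha = 1.0 + lam * (mode - low) / span
    beta = 1.0 + lam * (high - mode) / span
    return low + rng.betavariate(alpha, beta) * span

def percentile(values, p):
    # Nearest-rank percentile on a sorted copy; adequate for large samples.
    ordered = sorted(values)
    index = min(len(ordered) - 1, int(p / 100.0 * len(ordered)))
    return ordered[index]

rng = random.Random(42)  # fixed seed so the run is repeatable
n = 10_000
triangle = [sample_triangle(rng, 10, 14, 30) for _ in range(n)]
pert = [sample_pert(rng, 10, 14, 30) for _ in range(n)]

for p in (50, 80, 90):
    print(f"P{p}: Triangle {percentile(triangle, p):.1f} d, "
          f"PERT {percentile(pert, p):.1f} d")
```

For the Triangle, the P80 can also be derived analytically from its cumulative distribution function and equals 22 days, which gives a useful sanity check on the simulated figure.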

This matters because decision-makers act on those numbers. A project director who sees a P80 completion date of March 2028 will make different resource and contracting decisions than one who sees June 2028. If the gap between those two dates is caused by an arbitrary distribution choice rather than genuine uncertainty, the model has failed its purpose.

IQRM's position is direct: a risk model without a traceable data foundation is an opinion with a histogram attached. The distribution you select is the most visible place where that principle either holds or collapses.

Two Categories: Discrete vs Continuous Distributions

Every variable in a risk model falls into one of two categories, and each category uses a different family of distributions. Confusing the two is one of the most common modelling errors in practice.

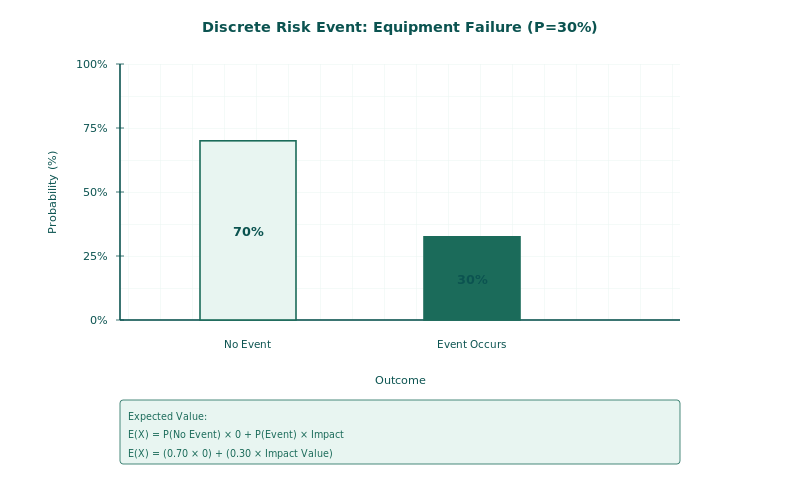

Discrete Distributions: Modelling Whether and How Often

Discrete distributions model the occurrence of risk events. They answer: does this risk happen in this iteration, and if so, how many times? These are attached to specific risk events in your risk register, not to activity durations.

Bernoulli: A single binary event that either happens or does not. Defined by one parameter: the probability of occurrence. Example: a 30% chance that a specific subcontractor fails to mobilise on time. Each iteration flips a weighted coin.

Binomial: Multiple binary events with a known, finite number of elements, each with the same probability. Example: a fleet of 10 trucks, each with a 15% chance of mechanical breakdown. The simulation models how many of the 10 actually break down in each iteration.

Poisson: A repeating event with a known average frequency over a timeframe. Defined by Lambda (the average rate). Example: heavy rainfall blocking site access 4.8 times per year. IQRM recommends the Poisson distribution specifically for calendar disruptions such as weather delays, permit suspensions, and equipment failures, because it models frequency directly rather than adjusting productivity, which avoids double-counting.
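The three discrete distributions above can be sketched with Python's standard library as follows. This is an illustrative sampler rather than any tool's implementation; the function names are hypothetical, and the Poisson draw is built by counting exponential inter-arrival times within one time unit.

```python
import random

def bernoulli(rng, p):
    # 1 if the risk event occurs in this iteration, else 0.
    return 1 if rng.random() < p else 0

def binomial(rng, n, p):
    # Number of occurrences among n independent elements, each with probability p.
    return sum(bernoulli(rng, p) for _ in range(n))

def poisson(rng, lam):
    # Count events in one unit of time by accumulating exponential
    # inter-arrival times with rate lam (a Poisson process).
    count = 0
    elapsed = rng.expovariate(lam)
    while elapsed < 1.0:
        count += 1
        elapsed += rng.expovariate(lam)
    return count

rng = random.Random(7)
iterations = 20_000
late_mobilisation = [bernoulli(rng, 0.30) for _ in range(iterations)]
truck_breakdowns = [binomial(rng, 10, 0.15) for _ in range(iterations)]
access_blockages = [poisson(rng, 4.8) for _ in range(iterations)]

print("Share of iterations with late mobilisation:", sum(late_mobilisation) / iterations)
print("Mean truck breakdowns per iteration:", sum(truck_breakdowns) / iterations)
print("Mean access blockages per year:", sum(access_blockages) / iterations)
```

The sample means should sit close to 0.30, 1.5 (10 × 0.15), and 4.8 respectively, which is a quick way to confirm that each sampler matches its stated parameter.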

Continuous Distributions: Modelling How Much

Continuous distributions model the magnitude of impact once a risk occurs or the range of uncertainty on an activity's duration or cost. These produce the three-point estimates (minimum, most likely, maximum) or statistical parameters (mean, standard deviation) that the Monte Carlo engine samples from.
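To show how the two families work together on a single risk event, the sketch below pairs a Bernoulli occurrence with a Triangle impact. The 30% probability echoes the subcontractor example above; the 5/10/30-day impact range and the function name `risk_event_delay` are hypothetical, chosen only for illustration.

```python
import random

def risk_event_delay(rng, probability, low, mode, high):
    # Discrete step: does the event occur in this iteration?
    if rng.random() >= probability:
        return 0.0
    # Continuous step: how large is the impact, given that it occurred?
    return rng.triangular(low, high, mode)

rng = random.Random(1)
iterations = 20_000
# Hypothetical impact range in days; only the 30% probability comes from the text.
delays = [risk_event_delay(rng, 0.30, 5, 10, 30) for _ in range(iterations)]

occurred = [d for d in delays if d > 0]
print("Occurrence rate:", len(occurred) / iterations)
print("Mean delay when the event occurs:", sum(occurred) / len(occurred))
print("Mean delay across all iterations:", sum(delays) / iterations)
```

Keeping the two steps separate means that probability and impact can each be traced to its own data source.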

The Continuous Distributions Every Risk Analyst Must Know

Four continuous distributions cover the vast majority of risk modelling scenarios. Each one is suited to a specific level of data maturity, and IQRM's data sufficiency rules make the selection systematic rather than subjective.

Uniform Distribution: The Starting Point When Data Is Scarce

The Uniform distribution assigns equal probability to every value between a minimum and maximum. There is no most likely value, no peak, and no shape. It is sometimes called the "clueless distribution" because it reflects a state where you know the boundaries but nothing about what is likely within them. Use it during conceptual or early feasibility phases when fewer than 10 historical data points are available. It is deliberately conservative: by treating every outcome as equally probable, it widens the output range and inflates contingency, which is appropriate when genuine uncertainty about the shape of the risk exists.

Triangle Distribution: The Workhorse for Expert Judgment

The Triangle distribution uses three inputs: minimum, most likely (mode), and maximum. It creates a sharp peak at the mode and straight lines down to the extremes. It is the most widely used distribution in project risk analysis because risk workshops naturally produce three-point estimates. IQRM recommends it when you have 10 to 30 data points, enough to identify a credible most likely value but not enough to fit a statistical curve. Be aware that the Triangle overestimates risk compared to PERT because its sharp peak gives less weight to the mode, spreading more probability toward the tails. When you are uncertain between Triangle and PERT, choose Triangle for early-stage estimates where conservatism is warranted.

BetaPERT Distribution: The Smoother Alternative

The BetaPERT distribution takes the same three inputs as the Triangle (minimum, most likely, maximum) but produces a smooth, rounded curve that gives significantly more weight to the most likely value. It avoids the abrupt change of slope (the kink) that the Triangle's density has at the mode. The result is a less conservative contingency estimate. IQRM recommends PERT when solid historical data supports the most likely value and when the organisation has moved past early-stage estimation into execution-phase planning. In tools like Safran Risk, the PERT option is standard and should be the default choice once data maturity supports it.
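The extra weight on the mode is easiest to see in the means. As a worked example, assuming the conventional BetaPERT form with a mode weight of 4 and writing a, m, b for the minimum, most likely, and maximum, the 10/14/30-day estimate gives:

```latex
\begin{align*}
\mu_{\text{Triangle}} &= \frac{a + m + b}{3} = \frac{10 + 14 + 30}{3} = 18 \text{ days} \\
\mu_{\text{PERT}} &= \frac{a + 4m + b}{6} = \frac{10 + 4(14) + 30}{6} = 16 \text{ days} \\
\alpha &= 1 + 4\,\frac{m - a}{b - a} = 1 + 4 \cdot \frac{4}{20} = 1.8 \\
\beta &= 1 + 4\,\frac{b - m}{b - a} = 1 + 4 \cdot \frac{16}{20} = 4.2
\end{align*}
```

Here α and β are the shape parameters of the underlying Beta distribution, which is then rescaled from [0, 1] to [a, b]. Some tools expose the mode weight as an adjustable parameter, so it is worth confirming the value your software uses.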

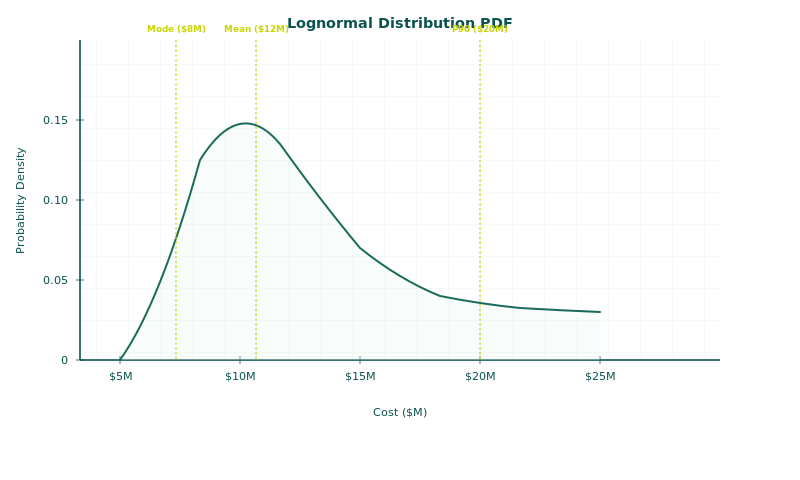

Lognormal Distribution: The Most Realistic for Mature Data

The Lognormal distribution is the most realistic representation of risk impacts in projects. It is right-skewed: most outcomes cluster near the lower end, but the tail extends far to the right, capturing the reality that things can get much worse than expected (the "fat tail" or Black Swan scenario). It is defined by two parameters, mean (Mu) and standard deviation (Sigma), expressed as relative percentages (actual/planned for durations, planned/actual for productivity). IQRM reserves the Lognormal for situations with more than 30 historical data points, typically sourced through the Risk Data Engine (RDE) methodology. The Normal distribution is explicitly rejected by IQRM for risk impacts because it can generate impossible negative values for durations and costs.
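The contrast with the Normal distribution can be illustrated with a small experiment. The sketch below assumes that Mu and Sigma are the arithmetic mean and standard deviation of the actual/planned ratio; the source does not state this, so treat it as an assumption, along with the helper name `lognormal_params` and the deliberately wide example values.

```python
import math
import random

def lognormal_params(mean, sd):
    # Convert the arithmetic mean and standard deviation of the ratio
    # into the log-space parameters expected by random.lognormvariate.
    sigma_log_sq = math.log(1.0 + (sd / mean) ** 2)
    mu_log = math.log(mean) - sigma_log_sq / 2.0
    return mu_log, math.sqrt(sigma_log_sq)

rng = random.Random(3)
iterations = 50_000
# Hypothetical inputs: 120% of plan on average, with a 50% spread.
mean_ratio, sd_ratio = 1.20, 0.50

mu_log, sigma_log = lognormal_params(mean_ratio, sd_ratio)
lognormal = [rng.lognormvariate(mu_log, sigma_log) for _ in range(iterations)]
normal = [rng.gauss(mean_ratio, sd_ratio) for _ in range(iterations)]

print("Lognormal sample mean:", sum(lognormal) / iterations)
print("Negative Lognormal samples:", sum(x < 0 for x in lognormal))
print("Negative Normal samples:", sum(x < 0 for x in normal))
```

With the same mean and spread, the Normal produces a small share of negative ratios while the Lognormal produces none, since it is defined only for positive values.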

IQRM's Data Sufficiency Framework: Let the Data Choose

The single most important principle in distribution selection is this: never use a distribution more precise than your data justifies. A Lognormal fitted to five data points is statistically indefensible. A Uniform applied to 50 data points wastes information. IQRM's data sufficiency rules eliminate this guesswork entirely.

IQRM Data Sufficiency Rules:

Fewer than 10 data points → Uniform (Min, Max)

10 to 30 data points → Triangle (Min, Mode, Max)

More than 30 data points → Lognormal (Mu, Sigma as relative %)

These thresholds follow common statistical rules of thumb. Below 10 data points, you cannot credibly identify a mode. Between 10 and 30, a mode becomes identifiable but the dataset is too small to fit a parametric curve with confidence. Above 30, the conventional large-sample threshold, parameter estimates become stable enough for parametric fitting (using tools like ModelRisk's AIC/SIC criteria) to be defensible.
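Because the rules are a simple mapping from data count to distribution family, they can be written as a small lookup. The sketch below is one possible encoding; the function name `choose_distribution` is hypothetical, and the handling of exactly 10 and exactly 30 data points follows a literal reading of "10 to 30".

```python
def choose_distribution(data_points: int) -> str:
    """Map a count of historical data points to the distribution family
    named in the data sufficiency rules."""
    if data_points < 0:
        raise ValueError("data_points must be non-negative")
    if data_points < 10:
        return "Uniform"      # fewer than 10: Min, Max only
    if data_points <= 30:
        return "Triangle"     # 10 to 30: Min, Mode, Max
    return "Lognormal"        # more than 30: Mu, Sigma

for count in (9, 10, 30, 31):
    print(count, "->", choose_distribution(count))
```

Recording the data count alongside each assigned distribution keeps the justification visible in the model itself.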

The practical effect is powerful. When a client challenges your distribution choice in a gate review or audit, you can point to the data count and the rule. The conversation shifts from "why did you pick that distribution?" to "here are the 35 data points from our Risk Data Engine that justify this Lognormal fit." That is a conversation you win every time.

Distribution Comparison: Triangle vs PERT vs Lognormal

The three continuous distributions most commonly confused in practice are Triangle, PERT, and Lognormal. The table below summarises their characteristics, inputs, and the situations where each is appropriate according to IQRM's methodology.

| Feature | Triangle | BetaPERT | Lognormal |
|---|---|---|---|
| Inputs | Min, Mode, Max | Min, Mode, Max | Mu (mean %), Sigma (std dev %) |
| Shape | Sharp peak, straight edges | Smooth bell curve | Right-skewed with fat tail |
| Weight on Mode | Less weight (conservative) | 4x weight on mode | N/A (parametric) |
| Conservatism | Higher contingency | Moderate contingency | Captures extreme tail risk |
| Data Threshold | 10-30 data points | 10-30+ with strong mode | 30+ data points |
| Best For | Early-stage, workshops | Execution-phase planning | Mature RDE programmes |

The key takeaway from this comparison is that the same three-point estimate (say, 10/14/30 days) will produce different P80 results depending on whether you assign it a Triangle or a PERT distribution. Triangle will push the P80 further out because it spreads more probability mass toward the extremes. PERT will pull it closer to the mode. Neither is "wrong"; the question is which one your data justifies.

Real-World Scenario: Choosing Distributions on an EPC Project

Consider a brownfield EPC project in the GCC region with a 36-month schedule. The risk analyst is building a QSRA model in Safran Risk and needs to assign distributions to four categories of uncertainty.

For civil and structural activities, the contractor has completed seven similar scopes in the past decade but only five have clean duration records. With fewer than 10 data points, the analyst assigns Uniform distributions: minimum 85% of planned duration, maximum 140% of planned duration. This is deliberately wide, but it honestly reflects the data limitation.

For piping fabrication and installation, the organisation's Risk Data Engine contains 22 completed work packages from three prior projects. The analyst has enough data to identify a credible most likely value, so Triangle distributions are assigned: minimum 90%, most likely 105%, maximum 160% of planned duration. The Triangle's conservatism is appropriate for a phase that historically shows high variability.

For equipment delivery, procurement records from the past five years provide 45 lead-time data points across similar equipment categories. The analyst fits a Lognormal distribution using ModelRisk's AIC criterion, yielding Mu = 1.08 and Sigma = 0.22 (expressed relative to planned lead time, that is, 108% and 22%). This captures the right-skewed reality that most deliveries arrive close to plan, but a meaningful minority arrive very late.

For weather disruptions, the project's meteorological data shows an average of 6.2 rain days per month during the summer season. The analyst models this as a Poisson distribution with Lambda = 6.2 per month, applied as calendar risk events rather than productivity adjustments.

Each distribution choice traces back to a specific data count and a specific rule. When the project director asks "why does the model show a 14-week gap between P50 and P80?", the analyst can walk through the data foundation for each distribution rather than offering vague appeals to expert judgment.
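The three continuous inputs from this scenario can be sampled side by side to see the spread each one implies. The sketch below uses the ranges quoted above and assumes that Mu and Sigma for the Lognormal are the arithmetic mean and standard deviation of the lead-time ratio; that interpretation, the helper names, and the dictionary labels are assumptions made for illustration.

```python
import math
import random

def percentile(values, p):
    # Nearest-rank percentile on a sorted copy.
    ordered = sorted(values)
    return ordered[min(len(ordered) - 1, int(p / 100.0 * len(ordered)))]

def lognormal_from_moments(rng, mean, sd):
    # Assumes mean and sd describe the ratio itself, not its logarithm.
    sigma_log_sq = math.log(1.0 + (sd / mean) ** 2)
    mu_log = math.log(mean) - sigma_log_sq / 2.0
    return rng.lognormvariate(mu_log, math.sqrt(sigma_log_sq))

rng = random.Random(2028)
n = 20_000
# Duration factors relative to plan (1.0 = exactly as planned).
factors = {
    "civil/structural (Uniform)": [rng.uniform(0.85, 1.40) for _ in range(n)],
    # random.triangular takes (low, high, mode).
    "piping (Triangle)": [rng.triangular(0.90, 1.60, 1.05) for _ in range(n)],
    "equipment delivery (Lognormal)": [
        lognormal_from_moments(rng, 1.08, 0.22) for _ in range(n)
    ],
}

for name, samples in factors.items():
    print(f"{name}: P50 = {percentile(samples, 50):.2f}, "
          f"P80 = {percentile(samples, 80):.2f}")
```

These are per-input factors only. A full QSRA would apply them to the planned durations in the schedule network, which is beyond the scope of this sketch.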

Common Distribution Mistakes and How to Avoid Them

Distribution selection errors are among the most frequent causes of unreliable risk model outputs. IQRM's consulting experience across oil and gas, infrastructure, and EPC projects reveals several recurring patterns.

Using Triangle everywhere by default. Many analysts assign Triangle distributions to every variable regardless of data availability. This overestimates contingency for well-understood activities (where PERT or Lognormal would be more appropriate) and underestimates it for poorly understood activities (where Uniform would be more honest).

Using the Normal distribution for risk impacts. The Normal (Gaussian) distribution is symmetric and can generate negative values. For project durations and costs, negative values are physically impossible. IQRM explicitly rejects the Normal distribution for risk modelling. If you need a parametric fit for 30+ data points, use Lognormal.

Setting symmetrical three-point estimates. When the minimum and maximum are equidistant from the mode (e.g., 10/15/20), the distribution implies that finishing early is just as likely as finishing late. On projects, this is almost never true. Upside is limited; downside is unbounded. Always check that your maximum-to-mode distance is larger than your mode-to-minimum distance.
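This check is easy to automate across a risk register. The sketch below compares the downside range with the upside range for a three-point estimate; the function names `skew_ratio` and `is_right_skewed` are hypothetical.

```python
def skew_ratio(low: float, mode: float, high: float) -> float:
    """Ratio of the downside range (high - mode) to the upside range (mode - low)."""
    if not (low <= mode <= high) or low == high:
        raise ValueError("expected low <= mode <= high with low < high")
    if mode == low:
        return float("inf")  # no upside at all
    return (high - mode) / (mode - low)

def is_right_skewed(low: float, mode: float, high: float) -> bool:
    # True when the maximum sits further from the mode than the minimum does.
    return skew_ratio(low, mode, high) > 1.0

print(is_right_skewed(10, 15, 20))  # False: symmetric estimate
print(is_right_skewed(10, 14, 30))  # True: downside range is 4x the upside
```

Flagging every estimate where the ratio is 1.0 or lower gives the workshop a short list of inputs to revisit.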

Modelling frequency risks as productivity adjustments. Weather delays and operational shutdowns should be modelled using Poisson-based calendar risks, not by reducing labour productivity. The latter double-counts the impact if you also have duration uncertainty on the same activities. IQRM recommends keeping frequency and magnitude separate using the appropriate discrete and continuous distributions respectively.

Ignoring correlation between distributions. Even perfectly chosen distributions will understate total project risk if the variables are assumed to be independent when they are not. If one trade performs poorly, related trades are likely to perform poorly too. Always pair your distribution selection with a defensible correlation matrix.
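The effect of ignoring correlation can be demonstrated with two related trades. The sketch below induces correlation by linking the underlying normal variables of two Lognormal duration factors; this is one simple technique chosen for illustration, and commercial tools may use other methods such as rank correlation. The planned durations, the spread, and the correlation value are all hypothetical.

```python
import math
import random

def simulate_total(rho, iterations=20_000, seed=11):
    rng = random.Random(seed)
    planned = (100.0, 100.0)  # hypothetical planned durations, days
    sigma_log = 0.2           # hypothetical log-space spread for both trades
    totals = []
    for _ in range(iterations):
        z1 = rng.gauss(0.0, 1.0)
        # Build a second standard normal with correlation rho to the first.
        z2 = rho * z1 + math.sqrt(1.0 - rho ** 2) * rng.gauss(0.0, 1.0)
        totals.append(planned[0] * math.exp(sigma_log * z1)
                      + planned[1] * math.exp(sigma_log * z2))
    return sorted(totals)

def p80(sorted_values):
    # 80th percentile of an already sorted list.
    return sorted_values[int(0.8 * len(sorted_values))]

independent = simulate_total(rho=0.0)
correlated = simulate_total(rho=0.8)
print(f"P80 total, independent: {p80(independent):.0f} days")
print(f"P80 total, correlated:  {p80(correlated):.0f} days")
```

The individual distributions are identical in both runs; only the dependence changes, yet the correlated case shows a later P80 for the combined duration.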

Automated Distribution Fitting for Large Datasets

When your Risk Data Engine produces large datasets (50+ data points for a given work package type), manual distribution selection becomes unnecessary. Statistical fitting tools can test the data against every available distribution family and identify the best mathematical fit automatically.

The two primary fitting criteria are the AIC (Akaike Information Criterion) and the SIC (Schwarz Information Criterion, also known as the Bayesian Information Criterion or BIC). Both rank candidate distributions by how well they match the observed data while penalising models with more parameters; the lowest score indicates the preferred fit. In practice, tools like ModelRisk perform this analysis in seconds, returning a ranked list of distributions with their fitted parameters.
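To make the two criteria concrete, the sketch below ranks three candidate families on a synthetic dataset using closed-form maximum likelihood estimates. It is not ModelRisk's API or algorithm, only an illustration of the formulas AIC = 2k − 2 ln L and SIC = k ln n − 2 ln L, where k is the number of fitted parameters and n the number of data points. The candidate set, function names, and data are hypothetical.

```python
import math
import random

def normal_loglik(data):
    # Log-likelihood of a Normal evaluated at its maximum likelihood fit.
    n = len(data)
    mu = sum(data) / n
    var = sum((x - mu) ** 2 for x in data) / n
    return -0.5 * n * (math.log(2 * math.pi * var) + 1.0)

def lognormal_loglik(data):
    # A Lognormal fit is a Normal fit on log(x), plus the term -sum(log x)
    # that arises from the change of variable.
    logs = [math.log(x) for x in data]
    return normal_loglik(logs) - sum(logs)

def exponential_loglik(data):
    n = len(data)
    rate = n / sum(data)  # maximum likelihood rate
    return n * math.log(rate) - rate * sum(data)

def rank_fits(data):
    n = len(data)
    candidates = {
        "Lognormal": (lognormal_loglik(data), 2),
        "Normal": (normal_loglik(data), 2),
        "Exponential": (exponential_loglik(data), 1),
    }
    rows = []
    for name, (loglik, k) in candidates.items():
        aic = 2 * k - 2 * loglik
        sic = k * math.log(n) - 2 * loglik
        rows.append((name, aic, sic))
    return sorted(rows, key=lambda row: row[1])  # lowest AIC first

# Synthetic, right-skewed duration ratios standing in for real records.
rng = random.Random(5)
data = [rng.lognormvariate(0.05, 0.6) for _ in range(300)]

for name, aic, sic in rank_fits(data):
    print(f"{name:<12} AIC = {aic:8.1f}  SIC = {sic:8.1f}")
```

A production fitting tool would test many more families and report fitted parameters as well; the ranking logic, however, follows the same pattern.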

This is where the data sufficiency framework meets the Risk Data Engine. Organisations that invest in systematic data collection through the RDE methodology (defining key risk parameters, setting collection frequencies, capturing data in structured databases, and fitting distributions statistically) eventually move beyond three-point estimates entirely. Their models use empirically derived distributions that no reviewer can credibly challenge, because the data speaks for itself.

Frequently Asked Questions

What is a probability distribution in risk analysis?

A probability distribution is a mathematical function that defines the range and likelihood of possible values a variable can take during a Monte Carlo simulation. It translates uncertainty into a shape that the simulation engine samples from across thousands of iterations to produce confidence levels and contingency estimates.

How do you choose between Triangle and PERT distributions?

Use Triangle when your data is limited (10 to 30 data points) and you want a conservative result. Use PERT when solid historical data supports the most likely value and you are in execution-phase planning. Both take the same three inputs, but PERT weights the mode four times as heavily as either extreme when computing its mean, (min + 4 × mode + max) / 6, producing a less conservative contingency estimate.

Why does IQRM reject the Normal distribution for risk modelling?

The Normal distribution is symmetric and can generate negative values, which are physically impossible for project durations and costs. Risk impacts on projects are almost always right-skewed: the downside is larger than the upside. The Lognormal distribution captures this reality without producing impossible results.

What is the data sufficiency rule for distribution selection?

IQRM's data sufficiency rules match distribution precision to data availability: fewer than 10 data points warrants Uniform, 10 to 30 warrants Triangle, and more than 30 warrants Lognormal. This prevents analysts from using overly precise distributions that their data cannot support.

When should I use the Poisson distribution in schedule risk analysis?

Use Poisson for recurring disruptions with a known average frequency, such as weather delays, equipment breakdowns, or permit suspensions. Model these as calendar risk events rather than productivity adjustments to avoid double-counting the impact when duration uncertainty is already assigned to the same activities.

What is the difference between discrete and continuous distributions in risk analysis?

Discrete distributions model whether and how often a risk event occurs (Bernoulli, Binomial, Poisson). Continuous distributions model how much impact the event causes once it occurs (Uniform, Triangle, PERT, Lognormal). Every risk event in a quantitative model needs both: a discrete distribution for occurrence and a continuous distribution for impact.

Can I use different distributions for different activities in the same model?

Yes, and you should. Different activities have different levels of data maturity. Using Uniform for poorly understood scopes and Lognormal for well-documented ones within the same model is not inconsistent; it is honest. The data sufficiency framework applies per variable, not per model.

The distribution you select is the single most auditable decision in your risk model. Get it right by letting the data decide, and your model becomes defensible. Get it wrong by defaulting to habit, and every output downstream is compromised. IQRM's data sufficiency framework exists to make this decision systematic, traceable, and repeatable, turning distribution selection from a point of vulnerability into a point of strength.

IQRM delivers specialist training and consulting in probability distribution selection, Monte Carlo simulation, and data-driven risk modelling. Our QRM Diploma programme equips professionals with the practical skills to select, justify, and defend distribution choices on real projects, from workshop-based three-point estimates through to automated statistical fitting using the Risk Data Engine.